This post is about a recent contribution I made to the i965 mesa driver to improve the emulation of the ETC/EAC texture formats on Intel Gen 7 and older GPUs, as part of my work for Igalia’s graphics team.

Demo:

The video mostly shows the behavior of some GL calls and operations with and without the patches that improve the emulation of the ETC/EAC formats on Gen7 GPUs. The same programs run first with the previous ETC/EAC emulation (upper terminal) and then with the new one (lower terminal).

So let’s see what all this is about:

The ETC and EAC formats are lossy compressed texture formats used mostly in embedded devices. OpenGL implementations of version 4.3 and later, and GLES implementations of version 3.0 and later, must support them in order to be conformant with the standard.

In other words, OpenGL/GLES implementations from these versions on should allow users to create compressed ETC/EAC texture images by calling the glCompressedTexImage functions, fill sub-images in textures using the glCompressedTexSubImage calls, copy data from ETC/EAC image sub-regions with glCopyImageSubData, read the pixels back from the texture with the glGetCompressedTexImage functions (and from sub-regions of the texture with glGetCompressedTextureSubImage), generate and display ETC/EAC mipmaps and cubemaps, and more.
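For reference, the imageSize argument that the glCompressedTexImage calls expect follows from the block layout of these formats: ETC2/EAC data is stored in 4x4 texel blocks of 8 bytes (e.g. GL_COMPRESSED_RGB8_ETC2) or 16 bytes (e.g. GL_COMPRESSED_RGBA8_ETC2_EAC). Below is a minimal sketch of that arithmetic; the helper name is made up for illustration and is not part of GL or mesa.

```c
#include <assert.h>
#include <stddef.h>

/* Hypothetical helper (not a GL or mesa function): size in bytes of one
 * ETC2/EAC image. The formats encode 4x4 texel blocks, so a partial block
 * at the right or bottom edge still occupies a whole block. */
static size_t etc2_image_size(unsigned width, unsigned height,
                              unsigned bytes_per_block)
{
    /*  8 bytes per block: e.g. GL_COMPRESSED_RGB8_ETC2
     * 16 bytes per block: e.g. GL_COMPRESSED_RGBA8_ETC2_EAC */
    assert(bytes_per_block == 8 || bytes_per_block == 16);

    size_t blocks_x = ((size_t)width + 3) / 4;   /* round up to whole blocks */
    size_t blocks_y = ((size_t)height + 3) / 4;
    return blocks_x * blocks_y * bytes_per_block;
}
```

For a 256x256 GL_COMPRESSED_RGB8_ETC2 image this gives 64 * 64 * 8 = 32768 bytes.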

Some GPUs support the ETC2/EAC images natively. This means that they can sample ETC2/EAC textures that have been previously generated, either by compressing non-compressed pixel data (glTexImage) or by loading already compressed data (glCompressedTexImage), to render the images. Older GPUs, including some Intel Generation 7 ones (Haswell, Ivy Bridge) and some older ones like Sandy Bridge, lack this native support in the hardware. For these older GPUs to be conformant with the standards (and for user programs to not crash on them), the support must be emulated in the software parts of the driver.

On mesa, in the i965 driver, we already had a partial ETC/EAC emulation: we could read the compressed pixels (glCompressedTexImage) and decompress them to formats that the hardware could sample, so that rendering would work. But this was quite problematic, as the glGetCompressed.* functions would return decompressed data instead of compressed, which the user program couldn’t handle. Operations like copying sub data or generating mipmaps wouldn’t work either. As a result, we had to abort every mapping of the ETC/EAC textures for reading with assertions, to prevent the user from reading or copying invalid decompressed values, and so we couldn’t support many GL calls.

Emulation

There are many approaches we could have followed to fix this problem, but we were looking for the least “invasive” one, the one that would affect the fewest parts of the driver.

Let’s see a few things about textures on mesa:

First of all a texture might have many levels.

In case of mipmap textures, the driver will use a different level for each image of the mipmap pyramid.

On some occasions, a level might consist of different slices.

In the i965 driver we store the textures as miptrees. The miptree is the struct that contains (or points to) all the information that is needed for the texture mapping to work (that is information about the color, the format, the dimensions, the number of levels and slices, the way the pixels are packed or unpacked, the tiling, the offsets, the usage and more). For the ETC/EAC textures, there was an extra field that was storing the original format of the texture before the decompression took place.

The driver might map the pixels that correspond to a miptree level/slice on a surface (or a buffer) for different purposes. Sometimes the mapping is done for reading: for example when the user requests the pixels back using a Get function, or wants to copy a region from the texture (pixels need to be read from the source before they are copied to the destination), or when the driver performs an operation that involves reading pixels, e.g. a compression or decompression. Other times the mapping is done for writing, for example when a miptree slice from a level needs to be updated or modified because the corresponding image, or a subregion of that image, was the destination of a copy or conversion operation.

So far, on Gen7:

At the time that a miptree was about to be created, the ETC/EAC pixels were decompressed to a convenient format that the GPU could sample. So the miptree would be constructed to contain information on how to map the pixels of a decompressed format. With this technique the rendering would work well (see the cubemaps on the video) as the GPU would perform the sampling from the decompressed data. But for several other operations, mapping a level/slice could eventually cause an unexpected behavior to the user program (which as I already said was prevented by aborting the unsupported operation).

With the recent ETC/EAC patch series:

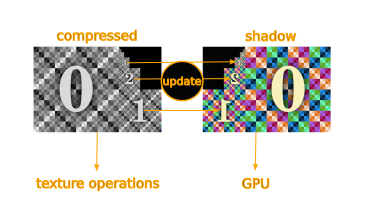

Each miptree of a texture contains a pointer to a general-purpose shadow miptree that can be used (if necessary) to store additional information about formats that the GPU can’t handle, in order to emulate them. This idea of the chained shadow trees belongs to Nanley Chery, who reviewed the patches: in my first patch series I had used a completely separate miptree and tried to address the problem at the image level.

Anyway, in this approach, no decompression takes place initially. The user creates a compressed texture by calling one of the glCompressedTexImage functions and the driver creates the corresponding miptree to store the information about these compressed pixels.

Every time the user queries back the data using the glGetCompressed.* functions, these compressed data are returned as they are, in the format the user expects.

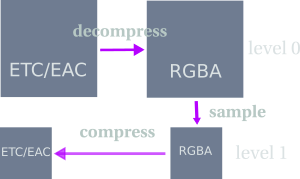

The driver then creates internally an empty shadow miptree, of the decompressed format we were using before, that is chained to the one with the compressed data.

After that, every time that the compressed miptree is modified or filled with new data, the driver flags it in order to update the shadow tree accordingly before rendering. And so, before every draw call that involves compressed ETC/EAC textures on Gen7 GPUs, the driver checks if their miptrees have been flagged, and if so, it decompresses the pixels of their levels/slices filling the corresponding shadow miptrees. Then it sends the shadow miptrees to the GPU.

This way, the GPU will always sample decompressed data, but other operations that involve the original data can still be performed, and as a result more GL calls can be supported. Note that the reason that the update of the shadow trees takes place right before the rendering is to make sure that the texture data are always up to date (which is important after a copy operation for example).
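The flow described above can be sketched with a toy data structure. This is only an illustration of the idea (compressed data kept as is, a chained shadow that is flagged on modification and refreshed lazily before a draw); all names are hypothetical and do not match the real i965 structs or functions, and the "decompression" is a placeholder copy rather than a real ETC/EAC decoder.

```c
#include <assert.h>
#include <stdbool.h>
#include <stddef.h>
#include <string.h>

#define TOY_MAX_BYTES 64

/* Toy stand-in for a miptree; illustrative only. */
struct toy_miptree {
    unsigned char data[TOY_MAX_BYTES];
    size_t size;
    struct toy_miptree *shadow;  /* decompressed copy the GPU would sample */
    bool shadow_needs_update;    /* set whenever `data` is modified */
    unsigned decompress_count;   /* bookkeeping for the demonstration */
};

/* Placeholder for decompression: a real driver would decode the blocks. */
static void toy_decompress(const struct toy_miptree *src,
                           struct toy_miptree *dst)
{
    memcpy(dst->data, src->data, src->size);
    dst->size = src->size;
}

/* Upload or modify compressed data: store it untouched, flag the shadow. */
static void toy_upload(struct toy_miptree *mt, const void *pixels, size_t size)
{
    assert(size <= TOY_MAX_BYTES);
    memcpy(mt->data, pixels, size);
    mt->size = size;
    mt->shadow_needs_update = true;
}

/* Read back: always the original compressed bytes, never the shadow. */
static const unsigned char *toy_get_compressed(const struct toy_miptree *mt)
{
    return mt->data;
}

/* Before a draw: refresh the shadow only if flagged, then return the tree
 * that should be sampled. */
static const struct toy_miptree *toy_prepare_draw(struct toy_miptree *mt)
{
    if (mt->shadow && mt->shadow_needs_update) {
        toy_decompress(mt, mt->shadow);
        mt->shadow_needs_update = false;
        mt->decompress_count++;
    }
    return mt->shadow ? mt->shadow : mt;
}
```

In this sketch, two consecutive draws after a single upload decompress only once, and a read-back between them still returns the bytes the user submitted.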

Advantages

- The obvious one: most user programs run well, without crashes as most GL calls are now supported and work with the compressed ETC/EAC data.

- Conformance tests that were previously failing now pass (KHR-GL46.direct_state_access.textures_compressed_subimage from the vk-gl-cts test suite).

- We were able to enable the OES_copy_image extension on Gen 7 (147 more vk-gl-cts and piglit tests have passed).

The obvious disadvantage

Compression is used to increase the speed of the texture operations and to decrease the use of resources. In our case, we keep both the compressed data and a decompressed copy in memory, and we update the decompressed copy before the draw call is executed. We know that this is slower. 🙂 But our goal was not to achieve the best performance of a feature on a GPU that lacks native support for the feature. Our goal was to allow user programs to run on such a GPU without modifications, to pass the conformance tests and to avoid any crashes from partially supported read and copy operations. The hardware spec is clear about which formats can be sampled by each GPU’s sampling unit, so developers are aware that the feature is not implemented in the hardware.
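To put a rough number on the memory cost, the sketch below adds the compressed data (8 bytes per 4x4 block, as in the RGB8 ETC2 format) to the size of a decompressed copy. The 4 bytes per texel used for the decompressed copy is an assumption for illustration; the actual format depends on which ETC/EAC format is being emulated. The function names are hypothetical.

```c
#include <stddef.h>

/* Bytes of one level of an ETC2 image with 8-byte 4x4 blocks. */
static size_t compressed_bytes(unsigned w, unsigned h)
{
    return (((size_t)w + 3) / 4) * (((size_t)h + 3) / 4) * 8;
}

/* Bytes of the decompressed copy; 4 bytes per texel is an assumption. */
static size_t shadow_bytes(unsigned w, unsigned h)
{
    return (size_t)w * h * 4;
}

/* Total footprint of one emulated level: both copies are kept. */
static size_t emulated_bytes(unsigned w, unsigned h)
{
    return compressed_bytes(w, h) + shadow_bytes(w, h);
}
```

Under these assumptions, a single 1024x1024 level takes 512 KiB of compressed data plus 4 MiB for the decompressed copy.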

Example PRM pages that show to what extent each format is supported on each GPU:

- p.88 Sandybridge PRM Vol4_Part1

- Ivybridge:

- VOL2_Part1 section 2.5.11 Format Conversion (vertex fetch).

- VOL4_Part1 section 2.12.2.1.2 Sampler Output Channel Mapping.

- VOL4_Part1 section 3.9.11 Render Target Write.

- for Skylake and on see the Render Target Surface Types

Mesa table:

https://raw.githubusercontent.com/mesa3d/mesa/master/src/intel/isl/isl_format.c (see struct: surface_format_info format_info)

Things that we couldn’t support

One thing that was impossible to support in OpenGL is the automatic mipmap generation, as it requires native or at least software compression. (In case of GLES the automatic mipmap generation is required for non compressed color formats only and so, there’s no need to support it).

There are two ways to generate mipmaps using OpenGL. The first is by loading an image for each mipmap level (with TexImage or CompressedTexImage for each level) as in the example program of the video above (see also example programs below). The second is to only provide the image for the first level and ask OpenGL to generate images for the following N levels (with one of the non-deprecated ways mentioned here).
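For the first method, the application needs the dimensions and the compressed size of every level it uploads. A minimal sketch of that bookkeeping, assuming a format with 8-byte 4x4 blocks (such as RGB8 ETC2); the helper names are made up for illustration:

```c
#include <stddef.h>

/* Dimension of mipmap level `level` for base dimension `base`:
 * halved (rounding down) once per level, never below 1. */
static unsigned mip_dim(unsigned base, unsigned level)
{
    unsigned d = base;
    while (level-- && d > 1)
        d >>= 1;
    return d ? d : 1;
}

/* Number of levels in a full chain: floor(log2(max(w, h))) + 1. */
static unsigned mip_level_count(unsigned w, unsigned h)
{
    unsigned m = w > h ? w : h;
    unsigned levels = 0;
    while (m) {
        levels++;
        m >>= 1;
    }
    return levels;
}

/* Compressed bytes of one level, assuming 8 bytes per 4x4 block. */
static size_t mip_level_bytes(unsigned w, unsigned h, unsigned level)
{
    size_t blocks_x = ((size_t)mip_dim(w, level) + 3) / 4;
    size_t blocks_y = ((size_t)mip_dim(h, level) + 3) / 4;
    return blocks_x * blocks_y * 8;
}
```

A 256x256 texture has 9 levels; note that the smallest levels (2x2 and 1x1) still occupy one full block each.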

The implementation of the second method requires sampling each level to generate the next. As we can’t sample the compressed image of the first level (lack of native support), the only alternative hack would be to decompress it and sample from the decompressed data.

But then, in order to have both the compressed and the decompressed data for the next level for the GetCompressed* and copy functions to work, we would need to re-compress the sampled decompressed data. We decided to not implement this feature as the software compression of ETC/EAC formats is not implemented on mesa and so far not required.

In any case, as the ETC/EAC are quite lossy formats, no sane developer would like to automatically generate the mipmap levels: most of them would just prefer to load the already compressed level images to preserve some of their quality. So I think that the lack of this feature is not really a problem. 🙂

Example programs explained

If you want to test the ETC/EAC support but you don’t want to build the Khronos vk-gl-cts or the piglit test suites, you can check out the small test programs I used for the video from here: https://github.com/hikiko/test-compression and here: https://github.com/hikiko/cubetest

Let’s see how they work:

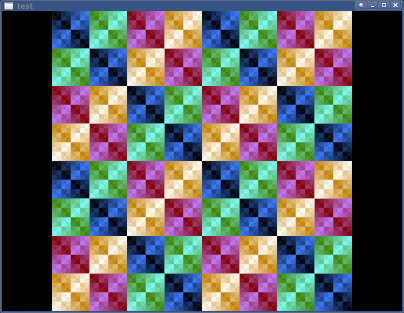

- Mipmap test:

    git clone https://github.com/hikiko/test-compression.git

The mipmap test loads different dumped mipmap level images and displays them in a window.

Build/run:

    make
    ./test compressed/full.tex

- Tests for glGetCompressedTexImage, glGetCompressedTextureSubImage2D and glCopyImageSubData:

Build/run:

    git checkout a76e52b12bf54efdeb95b7f6d8ecfdcec0ab81ae
    make
    ./test compressed_texture
    ./test compressed_texture -subtest
    ./test compressed_texture -copytest

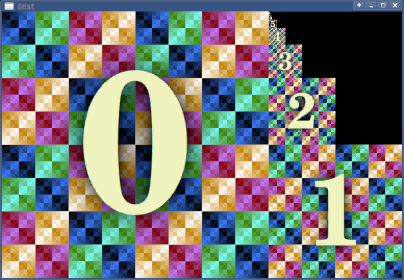

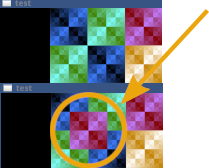

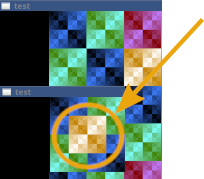

All the above tests load the same dumped compressed texture image (see picture below).

The first test displays it as it is and then reads back the compressed data by calling glGetCompressedTexImage2D to compare them with the submitted ones.

The second test overrides a small region of the texture (upper left corner where you should see a red quad) and reads the compressed pixels of that subregion for comparison. Its purpose is to test that the data returned by the glGetCompressedTextureSubImage2D match those the user sent with the glCompressedTexSubImage2D.
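One detail worth keeping in mind when writing a similar sub-image test: for the ETC2/EAC formats, the GL specification only accepts sub-regions that line up with the 4x4 block grid. The offsets must be multiples of four, and the width and height must be multiples of four unless the region reaches the edge of the texture level. Below is a minimal sketch of that check; the function name is hypothetical, and the test programs above may handle this differently.

```c
#include <stdbool.h>

/* Returns true if the region (x, y, w, h) can be updated in an ETC2/EAC
 * level of size level_w x level_h: it must start on a block boundary and
 * either cover whole 4x4 blocks or extend to the edge of the level. */
static bool etc2_subregion_ok(unsigned x, unsigned y,
                              unsigned w, unsigned h,
                              unsigned level_w, unsigned level_h)
{
    if (x % 4 != 0 || y % 4 != 0)
        return false;                 /* offsets not block aligned */
    if (x + w > level_w || y + h > level_h)
        return false;                 /* region exceeds the level */
    if (w % 4 != 0 && x + w != level_w)
        return false;                 /* partial block not at right edge */
    if (h % 4 != 0 && y + h != level_h)
        return false;                 /* partial block not at bottom edge */
    return true;
}
```

For example, a 64x64 region at offset (0, 0) of a 256x256 level is accepted, while the same region at offset (2, 0) is not.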

The third test copies a yellow quad region from the texture in its upper left corner, and overwrites a red quad, by calling glCopyImageSubData.

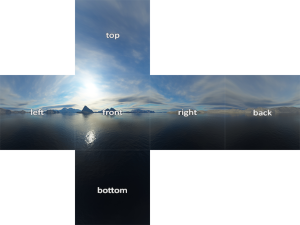

- Cubemap test:

The cubemap test only displays a cubemap. As I already said, the rendering was working before. The test was to make sure that it still works well now that the secondary mipmap tree is sent to the GPU.

Checkout, Build/run:

    git clone https://github.com/hikiko/cubetest.git
    cd cubetest
    make
    ./cubetest

Note that all programs require the freeglut library (freeglut-dev on Debian/Ubuntu).

Conformance and piglit tests that passed:

On HSW (previously failing): 1 test

    KHR-GL46.direct_state_access.textures_compressed_subimage

On HSW and IVB (previously skipped): 18 tests

    dEQP-GLES31.functional.texture.border_clamp.formats.compressed_srgb8_alpha8_etc2_eac.*
    dEQP-GLES31.functional.texture.border_clamp.formats.compressed_srgb8_etc2.*
    dEQP-GLES31.functional.texture.border_clamp.formats.compressed_srgb8_punchthrough_alpha1_etc2.*

On HSW, IVB, SNB (previously skipped): 129 tests

    dEQP-GLES3.functional.texture.format.compressed.*
    dEQP-GLES3.functional.texture.wrap.etc2_eac_srgb8_alpha8.*
    dEQP-GLES3.functional.texture.wrap.etc2_srgb8.*
    dEQP-GLES3.functional.texture.wrap.etc2_srgb8_punchthrough_alpha1.*
    piglit.spec.!opengl es 3_0.oes_compressed_etc2_texture-miptree_gles3 (srgb8, srgb8-alpha, srgb8-punchthrough-alpha1)
    piglit.spec.arb_es3_compatibility.oes_compressed_etc2_texture-miptree (srgb8 compat, srgb8 core, srgb8-alpha8 compat, srgb8-alpha8 core, srgb8-punchthrough-alpha1 compat, srgb8-punchthrough-alpha1 core)

Total tests passing: 148

(+one awaiting review at the time of writing)

Some acknowledgments

My initial idea to fix the emulation was to do a quick and “simple” hack: store the compressed data in a secondary tree as at the time we were using a tree with decompressed data as main, leave the rest of the code as it is and use flags to decide which one I should map every time that a map takes place. This “quick hack” required changes in the higher level i965 image functions that I managed to avoid in the final solution.

The idea to use a general-purpose secondary miptree to emulate different formats that we can’t sample came from Nanley Chery, who used this type of shadow miptree in his r8stencil and ASTC format emulations and who also reviewed my final patch series.

I’ve also received some help from Kenneth Graunke with whom I had discussed the problems I faced in my previous design and who suggested that I enable the OES_copy_image extension after I finish the work on the ETC/EAC support.

Finally, many people from my team (Sam, Alejandro, Iago, Andrés and Juan) made useful remarks during my previous attempts to fix the problem.

Is there a programmatic way to determine which compression type a given hardware supports?

The emulation requires more memory than using an uncompressed format directly (as we have the compressed data *and* the shadow), has some computational overhead, and uses the same bandwidth as uncompressed.

For performance critical stuff, one of the BCn formats would be the better choice then.

Not as far as I know. The extension you would normally use to check if a format is supported (https://www.khronos.org/registry/OpenGL/extensions/ARB/ARB_internalformat_query2.txt) doesn’t make a distinction between the emulated and the natively supported formats for the moment.

Hi!

I know this topic is not brand new. Nevertheless I add here now a comment. 😉

First, many thanks for your GREAT effort in this matter! It would be really nice to know if this principle described here to implement the OES_copy_image extension is limited to DX11 class hardware? So this will work only for DX11 and newer. It is not implementable on DX10 class hardware, even not on ATI/AMD? What is the approximate performance penalty of this emulation?

In the end this may be relevant primarily for Intel GPU hardware and maybe also NVIDIA. Regarding ATI/AMD it looks that their entire DX11 type hardware even down to the Terascale 2 GPU range is supporting this all natively.

And by the way, the OES_copy_image extension is of some interest in the emulation scene. For example, DuckStation is telling me if it is missing but it still works even if it is not present.

I somehow missed this comment and only saw it 2 years later when I was cleaning up some spam… my apologies for the very delayed reply!

So, the i965 driver is an Intel OpenGL driver and as a result this hack only works in OpenGL and not DirectX. Unfortunately, I am not familiar enough with the Windows drivers to know if someone has implemented something similar for DX :(