This post is about an experiment I’ve performed to find out whether it’s possible to fill a texture from an ANGLE EGL/GLESv2 context (ANGLE is an EGL/GLESv2 implementation on top of other graphics APIs) and use it from a native (for example Mesa3D) EGL/OpenGL context on Linux.

I’ve written a program that is similar to my previous one that was written in native EGL (see this post for more details).

I’ve first created two different contexts, displays, and surfaces: one set using the system driver and one using ANGLE. Then I’ve created two X11 windows, one for the ANGLE surface and one for the native one. I’ve made the ANGLE window hidden because it was only necessary for the rendering to work.

Then, I’ve generated two textures: one using ANGLE EGL/GLESv2 and one using the native EGL/OpenGL (by native I mean the system driver’s EGL, which could be the Mesa implementation for example).

I’ve then used the EGL_MESA_image_dma_buf_export extension of the native driver to export the file descriptor of the DMA buffer that was assigned to the native texture, in order to import it into ANGLE (using the EGL_EXT_image_dma_buf_import and EGL_EXT_image_dma_buf_import_modifiers extensions).

After the file descriptor was imported, I’ve used the OES_EGL_image_external extension to assign the DMA buffer to the ANGLE texture, to be its backing storage.

And after that, I could safely use ANGLE to fill the texture and native OpenGL to display it.

Because I wanted to do a similar experiment later on a browser-related task for Igalia (where I knew that the native implementation would be EGL/OpenGL whereas ANGLE would be EGL/GLESv2), I’ve forced the use of the OpenGL API in the native driver and the EGL/GLESv2 backend in ANGLE. But in theory, it could work with any EGL/OpenGL or EGL/GLESv2 implementation that exposes the extensions I’ve used.

Code and necessary tricks for this experiment to work

The concept I’ve just described might seem quite simple, but there were a few tricky parts involved. For example: how do we make native EGL and ANGLE run together in the same program without problems? As the same functions are implemented by both, it is important to make sure the correct function from the correct library is called every time. But let’s see the program step by step (note: if you aren’t interested in reading all the ANGLE setup details you can go directly to Step 3):

Step 1: Using both libraries in the same program

In order to use both ANGLE and native EGL in the same program I’ve decided to link with the native EGL and dynamically load any EGL function I’d need from ANGLE (prefixed by angle_). So, in my Makefile, I needed to define (-D) the ANGLE path for the dynamic loading (libpath), the library path for the native EGL (lib, this is where I have installed the mesa libraries) and the include path (inc, where the native EGL header files are installed). So my Makefile contains something like this:

```makefile
[...]
libpath = /home/eleni/igalia/code/angle/out/Debug
lib = -L/home/eleni/igalia/install/lib
inc = -I/home/eleni/igalia/install/include

CXXFLAGS = -pedantic -Wall -g $(inc) -MMD -DANGLEPATH=\"$(libpath)\" -DANGLE
LDFLAGS = $(lib) -lGLESv2 -lEGL -lX11 -ldl

$(bin): $(obj) Makefile
	$(CXX) -o $@ $(obj) $(LDFLAGS)
[...]
```

And in order to distinguish GL and EGL calls from each driver implementation, I’ve written a function, bool mygl_init(), that dynamically loads the ANGLE EGL and GL entry points:

```cpp
bool mygl_init()
{
    if (!(egl_so = dlopen(ANGLEPATH "/libEGL.so", RTLD_LAZY))) {
        fprintf(stderr, "Failed to open ANGLE EGL library.\n");
        return false;
    }

    void *so = egl_so;
    LOAD_FUNC("eglMakeCurrent", PFNEGLMAKECURRENTPROC, angle_eglMakeCurrent);
    LOAD_FUNC("eglGetConfigAttrib", PFNEGLGETCONFIGATTRIBPROC, angle_eglGetConfigAttrib);
    [...]
    LOAD_FUNC("eglGetPlatformDisplay", PFNEGLGETPLATFORMDISPLAYPROC, angle_eglGetPlatformDisplay);

    if (!(gl_so = dlopen(ANGLEPATH "/libGLESv2.so", RTLD_LAZY))) {
        fprintf(stderr, "Failed to open ANGLE GLESv2 library.\n");
        return false;
    }

    so = gl_so;
    LOAD_FUNC("glGenBuffers", PFNGLGENBUFFERSPROC, angle_glGenBuffers);
    LOAD_FUNC("glBindBuffer", PFNGLBINDBUFFERPROC, angle_glBindBuffer);
    [...]
    LOAD_FUNC("glEGLImageTargetTexture2DOES", PFNGLEGLIMAGETARGETTEXTURE2DOESPROC, angle_glEGLImageTargetTexture2DOES);

    return true;
}
```

(where LOAD_FUNC is a macro that simplifies dlsym, check mygl.h/mygl.cc)
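For readers who don’t want to open mygl.h right away, here is a rough idea of what a LOAD_FUNC-style macro can look like. This is only a sketch and not the macro from the repository: the error handling, the load_demo function and the PFNSTRLENPROC/my_strlen names are made up for illustration, and strlen from the running process stands in for an ANGLE entry point so that the snippet works without ANGLE installed.

```cpp
#include <dlfcn.h>
#include <cassert>
#include <cstdio>
#include <cstring>

// Hypothetical LOAD_FUNC-style macro (the real one lives in mygl.h).
// It expects a variable named `so` (a dlopen handle) to be in scope,
// like in mygl_init, and makes the enclosing function return false
// when the symbol cannot be found.
#define LOAD_FUNC(name, type, var)                                       \
    do {                                                                 \
        var = reinterpret_cast<type>(dlsym(so, name));                   \
        if (!var) {                                                      \
            fprintf(stderr, "Failed to load %s: %s\n", name, dlerror()); \
            return false;                                                \
        }                                                                \
    } while (0)

// Stand-in for an ANGLE function pointer (assumption for this demo only).
typedef size_t (*PFNSTRLENPROC)(const char *);
PFNSTRLENPROC my_strlen;

bool load_demo()
{
    // A null path returns a handle to the main program and its dependencies.
    void *so = dlopen(nullptr, RTLD_LAZY);
    if (!so)
        return false;

    LOAD_FUNC("strlen", PFNSTRLENPROC, my_strlen);
    return true;
}
```

After load_demo() returns true, my_strlen can be called like the regular function, which is the same pattern as calling angle_eglMakeCurrent after mygl_init(). On older glibc versions the program needs to be linked with -ldl, as in the Makefile above.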

After that, I could call eglMakeCurrent for native EGL and angle_eglMakeCurrent for ANGLE and do the same for all EGL and GL functions. So, then I could start writing the program.

This program’s main function is quite similar to the one we’ve seen in the previous example:

```cpp
int main(int argc, char **argv)
{
    if (!init()) {
        fprintf(stderr, "Failed to initialize EGL context.\n");
        return 1;
    }

    if (!gl_init())
        return 1;

    // event loop
    for (;;) {
        XEvent xev;
        XNextEvent(xdpy, &xev);
        if (!handle_xevent(&xev))
            break;

        if (redraw_pending) {
            redraw_pending = false;
            display();
        }
    }

    cleanup();
    return 0;
}
```

It uses the same few functions we’ve seen in the previous example: init, gl_init, handle_xevent and display.

Step 2: Creating displays, surfaces, windows, and contexts

Function init contains the code to initialize the native EGL and ANGLE EGL displays, surfaces and contexts, and to create two X windows: one visible (native surface) and one hidden (ANGLE surface). It is quite similar to the init of the previous EGL/DMA example, except for a few extra parts that were needed to initialize the ANGLE platform and context:

In init I’ve made sure ANGLE had been dynamically opened and its functions were loaded by calling the function mygl_init, explained above, and then I made sure ANGLE provides the extensions I’d need (I’ve also included “eglext_angle.h” for that reason).

```cpp
static bool init()
{
    if (!mygl_init()) {
        return false;
    }

    const char *client_extensions = angle_eglQueryString(EGL_NO_DISPLAY, EGL_EXTENSIONS);
    if (!client_extensions) {
        fprintf(stderr, "ANGLE EGL extensions not found.\n");
        return false;
    }

    if (!strstr(client_extensions, "ANGLE_platform_angle")) {
        fprintf(stderr, "ANGLE_platform_angle extension not found.\n");
        return false;
    }

    [...]
```
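The strstr check is good enough for an experiment, but it also succeeds when the string it looks for is only part of a longer extension name. If a stricter check is wanted, a small helper that compares whole space-separated tokens could look like the sketch below. The has_extension name is hypothetical (it is not part of the program), and note that with whole-token matching the full name EGL_ANGLE_platform_angle has to be passed.

```cpp
#include <cassert>
#include <cstring>

// Returns true only if `name` appears as a complete, space-separated
// token in the extension string `list` (hypothetical helper).
bool has_extension(const char *list, const char *name)
{
    if (!list || !name || !*name)
        return false;

    const size_t len = strlen(name);
    for (const char *p = list; (p = strstr(p, name)) != nullptr; p += len) {
        // the match must start at the beginning or right after a space...
        const bool starts = (p == list) || (p[-1] == ' ');
        // ...and must end at a space or at the end of the string
        const bool ends = (p[len] == ' ') || (p[len] == '\0');
        if (starts && ends)
            return true;
    }
    return false;
}
```

In init it would be used as has_extension(client_extensions, "EGL_ANGLE_platform_angle").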

Next thing was to call egl_init to get access to a native EGL and an ANGLE display. We’ve seen how we access the native EGL display already, so here’s how we can get an ANGLE display, while forcing an EGL/GLESv2 backend:

```cpp
/* ANGLE */
static const EGLAttrib angle_atts[] = {
    EGL_PLATFORM_ANGLE_DEVICE_TYPE_ANGLE,
    EGL_PLATFORM_ANGLE_DEVICE_TYPE_EGL_ANGLE,
    EGL_PLATFORM_ANGLE_TYPE_ANGLE,
    EGL_PLATFORM_ANGLE_TYPE_OPENGLES_ANGLE,
    EGL_NONE,
};

printf("ANGLE EGL display\n");
if ((ctx_angle.dpy = angle_eglGetPlatformDisplay(EGL_PLATFORM_ANGLE_ANGLE, (void *)xdpy, angle_atts)) == EGL_NO_DISPLAY) {
    fprintf(stderr, "Failed to get ANGLE EGL display : error : %s.\n", eglGetError() != EGL_SUCCESS ? "yes" : "no");
    return false;
}

if (!angle_eglInitialize(ctx_angle.dpy, NULL, NULL)) {
    fprintf(stderr, "Failed to initialize ANGLE EGL.\n");
    return false;
}

angle_eglBindAPI(EGL_OPENGL_ES_API);
```

Note that xdpy above is the X11 display, and I’ve used EGL_PLATFORM_ANGLE_ANGLE in the platform field to request an ANGLE display that uses the EGL device type and the OPENGLES backend (this is what ANGLE calls GLESv2), by passing the appropriate attributes from the extensions (see angle_atts above)!

Next step was to create the ANGLE and native EGL surfaces and then the contexts. Each surface must be attached to an X11 window or a pixmap. Because in this example I wanted to display the texture contents on screen, I used windows. Using pixmaps would be similar; EGL_WINDOW_BIT would just become EGL_PIXMAP_BIT.

I’ve set the configuration attributes I’d need to select a visual id for the X11 windows I was going to create. Note that I used a different attribute list for ANGLE because I wanted the ANGLE context to be GLESv2 while the native context is OpenGL:

```cpp
const EGLint angle_attr_list[] = {
    EGL_COLOR_BUFFER_TYPE, EGL_RGB_BUFFER,
    EGL_RENDERABLE_TYPE, EGL_OPENGL_ES2_BIT,
    EGL_SURFACE_TYPE, EGL_WINDOW_BIT,
    EGL_RED_SIZE, 8,
    EGL_BLUE_SIZE, 8,
    EGL_GREEN_SIZE, 8,
    EGL_DEPTH_SIZE, 16,
    EGL_STENCIL_SIZE, EGL_DONT_CARE,
    EGL_NONE,
};
```

Then I’ve created the X11 windows by calling x_create_window once with the native EGL visual and once with the ANGLE one (as I said, I need the windows because I can’t render to a texture if the surfaces aren’t attached to a window or pixmap). I only map (= make visible) the window that corresponds to the native EGL surface; the ANGLE one can stay hidden!

After that I’ve created the surfaces for each window:

```cpp
ctx_es.surf = eglCreateWindowSurface(ctx_es.dpy, ctx_es.config, win, 0);
if (ctx_es.surf == EGL_NO_SURFACE) {
    fprintf(stderr, "Failed to create EGL surface for win.\n");
    return false;
}

ctx_angle.surf = angle_eglCreateWindowSurface(ctx_angle.dpy, ctx_angle.config, hidden_win, 0);
if (ctx_angle.surf == EGL_NO_SURFACE) {
    fprintf(stderr, "Failed to create ANGLE EGL surface for hidden win.\n");
    return false;
}
```

and the contexts:

```cpp
/* create EGL context */
if (!egl_create_context(0)) {
    return false;
}

/* create ANGLE context */
if (!angle_egl_create_context(0)) {
    return false;
}
```

and jumped to the OpenGL/GLESv2 initialization.

Step 3: Initializing OpenGL and GLESv2 structs from each driver, and filling the ANGLE driver texture

Let’s see how the dma_buf buffer was used to share texture data between the textures of the two different drivers. We are going to analyze the code of the gl_init and display functions.

As we’ve already seen, at this moment we have two contexts: a native EGL/OpenGL, ctx_es.ctx (probably ctx_gl would be a better name), and ctx_angle.ctx. Structs ctx_angle and ctx_es store all the information we’d need to render on a window using each driver and are of the form:

```cpp
struct EGL_ctx {
    EGLDisplay dpy;
    EGLSurface surf;
    EGLContext ctx;
    EGLConfig config;
};
```

The native context will be the one that draws the textures on screen (that’s why the window to which we’ve attached its surface is visible) and the ANGLE context will fill the textures. Which textures? Let’s take a look at some parts of gl_init:

```cpp
static bool gl_init()
{
    // Context that draws
    eglMakeCurrent(ctx_es.dpy, ctx_es.surf, ctx_es.surf, ctx_es.ctx);

    static const float vertices[] = {
        1.0, 1.0,
        1.0, 0.0,
        0.0, 1.0,
        0.0, 0.0
    };

    glGenBuffers(1, &gl_vbo);
    glBindBuffer(GL_ARRAY_BUFFER, gl_vbo);
    glBufferData(GL_ARRAY_BUFFER, sizeof vertices, vertices, GL_STATIC_DRAW);

    gl_prog = create_program_load("data/texmap.vert", "data/texmap.frag");

    glGenTextures(1, &gl_tex);
    glBindTexture(GL_TEXTURE_2D, gl_tex);
    glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_MIN_FILTER, GL_NEAREST);
    glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_MAG_FILTER, GL_NEAREST);

    // system egl we dont fill the image with pixels here! just creating it
    glTexImage2D(GL_TEXTURE_2D, 0, GL_RGBA, 256, 256, 0, GL_RGBA, GL_UNSIGNED_BYTE, 0);
    glFlush(); //just in case

    EGLImage img = eglCreateImage(ctx_es.dpy, ctx_es.ctx, EGL_GL_TEXTURE_2D,
                                  (EGLClientBuffer)(uint64_t)gl_tex, 0);
    assert(img != EGL_NO_IMAGE);

    PFNEGLEXPORTDMABUFIMAGEQUERYMESAPROC eglExportDMABUFImageQueryMESA =
        (PFNEGLEXPORTDMABUFIMAGEQUERYMESAPROC)eglGetProcAddress("eglExportDMABUFImageQueryMESA");
    PFNEGLEXPORTDMABUFIMAGEMESAPROC eglExportDMABUFImageMESA =
        (PFNEGLEXPORTDMABUFIMAGEMESAPROC)eglGetProcAddress("eglExportDMABUFImageMESA");

    EGLBoolean ret;
    ret = eglExportDMABUFImageQueryMESA(ctx_es.dpy, img,
                                        &gl_dma_info.fourcc,
                                        &gl_dma_info.num_planes,
                                        &gl_dma_info.modifiers);
    if (!ret) {
        fprintf(stderr, "eglExportDMABUFImageQueryMESA failed.\n");
        return false;
    }

    ret = eglExportDMABUFImageMESA(ctx_es.dpy, img,
                                   &gl_tex_dmabuf_fd,
                                   &gl_dma_info.stride,
                                   &gl_dma_info.offset);
    if (!ret) {
        fprintf(stderr, "eglExportDMABUFImageMESA failed.\n");
        return false;
    }

    [...]
```

Here we make the native driver’s context current, create the vertex buffer, and compile the shaders we are going to use to render a quad on screen later. Then we generate an empty texture that we are going to map onto this quad and display on the EGL surface that corresponds to the visible X11 window we’ve created in Step 2 of this post.

Then we create an EGLImage from that texture in order to access the DMA buffer that is used as a backing storage for it. We call eglExportDMABUFImageQueryMESA to query some information about this buffer that will be useful when we’ll try to use it from the ANGLE driver. Finally we export the dma buffer file descriptor (as well as the stride and offset that are 0 in this simple example).
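When debugging this step it helps to print the fourcc value returned by the query in a readable form. A DRM fourcc code packs four ASCII characters into 32 bits, least significant byte first (see drm_fourcc.h in the references), so it can be decoded with a helper like the one below. The fourcc_to_string name is my own and is not part of the program; this is just a small sketch.

```cpp
#include <cassert>
#include <cstdint>
#include <string>

// Decode a DRM fourcc code into its four characters
// (hypothetical debugging helper, lowest byte is the first character).
std::string fourcc_to_string(uint32_t fourcc)
{
    std::string s(4, ' ');
    for (int i = 0; i < 4; i++)
        s[i] = static_cast<char>((fourcc >> (8 * i)) & 0xff);
    return s;
}
```

For example, fourcc_to_string(0x34324241) gives "AB24", which is how DRM_FORMAT_ABGR8888 is spelled in drm_fourcc.h. Which format a driver actually reports for a given texture depends on the driver.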

Time to initialize the ANGLE structs!

```cpp
angle_eglMakeCurrent(ctx_angle.dpy, ctx_angle.surf, ctx_angle.surf, ctx_angle.ctx);

// xor image
unsigned char *pptr = pixels;
for (int i = 0; i < 256; i++) {
    for (int j = 0; j < 256; j++) {
        int r = (i ^ j);
        int g = (i ^ j) << 1;
        int b = (i ^ j) << 2;

        *pptr++ = r;
        *pptr++ = g;
        *pptr++ = b;
        *pptr++ = 255;
    }
}

EGLAttrib atts[] = {
    // W, H used in TexImage2D above!
    EGL_WIDTH, 256,
    EGL_HEIGHT, 256,
    EGL_LINUX_DRM_FOURCC_EXT, gl_dma_info.fourcc,
    EGL_DMA_BUF_PLANE0_FD_EXT, gl_tex_dmabuf_fd,
    EGL_DMA_BUF_PLANE0_OFFSET_EXT, 0,
    EGL_DMA_BUF_PLANE0_PITCH_EXT, gl_dma_info.stride,
    EGL_NONE,
};

EGLImage angle_img = angle_eglCreateImage(ctx_angle.dpy, EGL_NO_CONTEXT, EGL_LINUX_DMA_BUF_EXT,
                                          (EGLClientBuffer)(uint64_t)0, atts);
assert(angle_img != EGL_NO_IMAGE);

// FIXME. FIXES Until here works the rest needs to be modified
angle_glGenTextures(1, &angle_gl_tex);
angle_glBindTexture(GL_TEXTURE_2D, angle_gl_tex);
angle_glEGLImageTargetTexture2DOES(GL_TEXTURE_2D, angle_img);

angle_glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_MIN_FILTER, GL_NEAREST);
angle_glTexParameteri(GL_TEXTURE_2D, GL_TEXTURE_MAG_FILTER, GL_NEAREST);

angle_glTexSubImage2D(GL_TEXTURE_2D, 0, 0, 0, 256, 256, GL_RGBA, GL_UNSIGNED_BYTE, pixels);
```

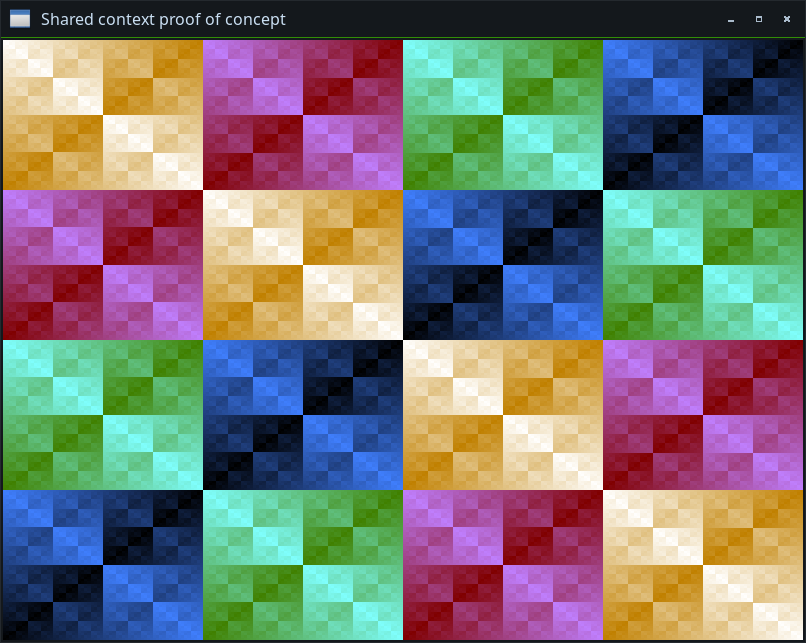

We make ANGLE context current and we generate some pixel data using the same XOR pattern I’ve used in the previous EGL/dma_buf blog post (and in many other examples as it’s easy to generate and contains many colors) using software:

Then, we create an empty image ( angle_eglCreateImage) using the exported file descriptor and the other information we’ve exported from EGL about the dma_buf buffer so that the ANGLE driver knows how to access it.
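One detail worth noting: the attribute list used in this experiment passes the fourcc, file descriptor, offset and pitch, but not the modifier that was queried earlier. If a driver reports a non-linear modifier, EGL_EXT_image_dma_buf_import_modifiers expects the 64-bit value to be passed as two 32-bit attributes, EGL_DMA_BUF_PLANE0_MODIFIER_LO_EXT and EGL_DMA_BUF_PLANE0_MODIFIER_HI_EXT. Whether that is needed depends on the driver and format; the split itself is simple and could be sketched as follows (split_modifier is a hypothetical helper, not part of the program):

```cpp
#include <cassert>
#include <cstdint>
#include <utility>

// Split a 64-bit DRM format modifier into the two 32-bit halves that the
// import extension takes as separate attributes.
// first = low 32 bits (MODIFIER_LO), second = high 32 bits (MODIFIER_HI).
std::pair<uint32_t, uint32_t> split_modifier(uint64_t modifier)
{
    return { static_cast<uint32_t>(modifier & 0xffffffffu),
             static_cast<uint32_t>(modifier >> 32) };
}
```

The two halves would then be appended to the attribute list before EGL_NONE, next to the corresponding EGL_DMA_BUF_PLANE0_MODIFIER_LO_EXT and EGL_DMA_BUF_PLANE0_MODIFIER_HI_EXT keys.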

The next step is to create a texture from that EGLImage (which will use the same DMA buffer as backing storage) and fill it with the pixels we’ve just generated. We can generate the texture by calling angle_glEGLImageTargetTexture2DOES(GL_TEXTURE_2D, angle_img); (instead of TexImage2D) and fill it using angle_glTexSubImage2D.

Step 4: Mapping the texture of the native system driver to see if it was filled with the ANGLE texture data

After that, in display (the drawing function) we can make current the native context again, render a quad using the vertices from the vertex buffer and the shaders, and display the native texture:

```cpp
eglMakeCurrent(ctx_es.dpy, ctx_es.surf, ctx_es.surf, ctx_es.ctx);
glClearColor(1.0, 0.0, 0.0, 1.0);
glClear(GL_COLOR_BUFFER_BIT);

bind_program(gl_prog);
glBindTexture(GL_TEXTURE_2D, gl_tex);

glBindBuffer(GL_ARRAY_BUFFER, gl_vbo);
glVertexAttribPointer(0, 2, GL_FLOAT, GL_FALSE, 0, 0);
glEnableVertexAttribArray(0);
glBindBuffer(GL_ARRAY_BUFFER, 0);

glDrawArrays(GL_TRIANGLE_STRIP, 0, 4);
eglSwapBuffers(ctx_es.dpy, ctx_es.surf);
```

What we see on screen is an image filled with the XOR pattern above. When we’ve created the native texture, it was empty. But since it shares the same backing storage with the ANGLE texture, and we’ve filled the ANGLE texture from ANGLE, it is now filled with the ANGLE data. Those data always lived in the same dma_buf buffer, and so no copy was required to transfer them from ANGLE to EGL!

About ANGLE MakeCurrent:

While I was experimenting with this program, I’ve changed the code a bit to fill the texture from display with different patterns each time. And I’ve realized that there’s something we should keep in mind when using it in the same process with another EGL implementation!

It seems that the ANGLE eglMakeCurrent doesn’t actually call MakeCurrent… 😀 In order to increase performance, it stores a pointer to the last current context. If that context is different from the one passed to the next MakeCurrent then it updates the state and calls MakeCurrent, otherwise, it doesn’t do anything. This is a great optimization when ANGLE is the only EGL implementation in the system. But imagine this display function:

```cpp
void display()
{
    eglMakeCurrent(egl_context, params);

    // [... code for the native driver ...]

    angle_eglMakeCurrent(angle_context, other_params);

    // [... code for the angle driver ...]
}
```

being called multiple times. For ANGLE, we always call angle_eglMakeCurrent with the same context and as a result nothing is updated after the first time!

The easiest fix for that (in case we need to use ANGLE and the system’s driver in the same process) is to invalidate the ANGLE context at the top of display by calling something like:

```cpp
angle_eglMakeCurrent(ctx_angle.dpy, 0, 0, 0);
```

and then the next ANGLE MakeCurrent call will actually be forced to call ANGLE’s internal MakeCurrent.
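To make the problem easier to see, here is a tiny toy model of the situation. It is NOT ANGLE’s code: Driver, CachingFrontEnd and run_frames are names I made up, and contexts are just integers. The model only reproduces the logic described above: a front end that remembers the last context it made current and skips the real call when asked for the same one, while another EGL implementation changes the real current context behind its back.

```cpp
#include <cassert>

// Toy model: the "driver" holds the context that is really current (0 = none).
struct Driver {
    int current = 0;
};

void driver_make_current(Driver &d, int ctx) { d.current = ctx; }

// Front end that caches the last context and skips redundant driver calls.
struct CachingFrontEnd {
    Driver &drv;
    int last = 0;

    explicit CachingFrontEnd(Driver &d) : drv(d) {}

    void make_current(int ctx)
    {
        if (ctx == last)
            return;             // cached: nothing reaches the driver
        last = ctx;
        if (ctx != 0)
            driver_make_current(drv, ctx);
    }
};

// Simulates 3 calls of display() and returns in how many of them the
// "ANGLE" context was really current when the ANGLE code would run.
int run_frames(bool invalidate_first)
{
    const int NATIVE_CTX = 1, ANGLE_CTX = 2;
    Driver drv;
    CachingFrontEnd angle(drv);
    int frames_ok = 0;

    for (int frame = 0; frame < 3; frame++) {
        if (invalidate_first)
            angle.make_current(0);            // like angle_eglMakeCurrent(dpy, 0, 0, 0)
        driver_make_current(drv, NATIVE_CTX); // native eglMakeCurrent
        angle.make_current(ANGLE_CTX);        // angle_eglMakeCurrent
        if (drv.current == ANGLE_CTX)
            frames_ok++;
    }
    return frames_ok;
}
```

In this model run_frames(false) returns 1 (only the first frame really switches to the ANGLE context) and run_frames(true) returns 3, which matches the behavior I’ve observed in the real program.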

Extending the program

This program could be extended in various ways. We could use a different process for each context and exchange the file descriptor through some form of interprocess communication (the previous post’s references contain an example that uses unix sockets for this purpose), or use some other kind of object to attach the surfaces to (e.g. Wayland buffers instead of X11 windows).

But the mechanism to exchange the dma buffer file descriptor and description in order to reuse it from both drivers would be the same.
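For the multi-process variant, the usual way to hand a file descriptor to another process on Linux is an SCM_RIGHTS control message over a unix domain socket. The sketch below is not taken from the program or from the referenced example; send_fd and recv_fd are hypothetical helper names and the error handling is minimal. It assumes both processes already share a connected AF_UNIX socket.

```cpp
#include <sys/socket.h>
#include <sys/uio.h>
#include <unistd.h>
#include <cassert>
#include <cstring>

// Send one file descriptor over a connected unix socket.
bool send_fd(int sock, int fd)
{
    char dummy = 'F';                 // at least one byte of regular data is sent
    iovec iov = { &dummy, 1 };
    alignas(cmsghdr) char ctrl[CMSG_SPACE(sizeof(int))] = {};

    msghdr msg = {};
    msg.msg_iov = &iov;
    msg.msg_iovlen = 1;
    msg.msg_control = ctrl;
    msg.msg_controllen = sizeof ctrl;

    cmsghdr *cm = CMSG_FIRSTHDR(&msg);
    cm->cmsg_level = SOL_SOCKET;
    cm->cmsg_type = SCM_RIGHTS;       // ancillary data carries file descriptors
    cm->cmsg_len = CMSG_LEN(sizeof(int));
    memcpy(CMSG_DATA(cm), &fd, sizeof(int));

    return sendmsg(sock, &msg, 0) == 1;
}

// Receive one file descriptor; returns -1 on failure.
int recv_fd(int sock)
{
    char dummy;
    iovec iov = { &dummy, 1 };
    alignas(cmsghdr) char ctrl[CMSG_SPACE(sizeof(int))] = {};

    msghdr msg = {};
    msg.msg_iov = &iov;
    msg.msg_iovlen = 1;
    msg.msg_control = ctrl;
    msg.msg_controllen = sizeof ctrl;

    if (recvmsg(sock, &msg, 0) != 1)
        return -1;

    cmsghdr *cm = CMSG_FIRSTHDR(&msg);
    if (!cm || cm->cmsg_level != SOL_SOCKET || cm->cmsg_type != SCM_RIGHTS)
        return -1;

    int fd = -1;
    memcpy(&fd, CMSG_DATA(cm), sizeof(int));
    return fd;                        // a new descriptor referring to the same buffer
}
```

The exporting process would call send_fd(sock, gl_tex_dmabuf_fd) and the importing one would use the result of recv_fd(sock) for EGL_DMA_BUF_PLANE0_FD_EXT. The rest of the description (fourcc, stride, offset, modifier) consists of plain integers and can travel as regular payload on the same socket.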

And that was all. Please check the links below for the source code, and other references, as well as my previous blog post for more details about reusing DMA buffers with EGL.

Links

Source code:

angle-egl-dmabuf-exchange branch

References:

[1] Previous EGL/dma_buf example: Using EGL and the dma_buf kernel framework to associate two textures with the contents of the same buffer without copy taking place

[2] Buffer Sharing and Synchronization (The Linux Kernel)

[3] EGL Reference pages

[4] GLESv2 Reference pages

[5] libgbm symbols

[6] EGL_MESA_image_dma_buf_export specification

[7] EGL_EXT_image_dma_buf_import specification

[8] EGL_EXT_image_dma_buf_import_modifiers specification

[9] Generic Buffer Manager in Wikipedia

[10] OES_EGL_image_external specification

[11] linux/master/include/uapi/drm/drm_fourcc.h

Happy Hacking!